A Survey on Proactive Deepfake Defense: Disruption and Watermarking

Abstract

The rapid proliferation of generative AI has led to unprecedented capabilities in synthesizing realistic deepfakes across multiple modalities. This raises significant concerns regarding privacy, security, and copyright protection. Unlike passive detection approaches that operate after deepfakes have been created and distributed, proactive defense mechanisms aim to prevent the generation of malicious synthetic content at its source.

This paper provides a comprehensive survey of current proactive deepfake defense strategies, including Disruption and Watermarking. We analyze proactive approaches across various evaluation metrics (imperceptibility, protectability/detectability, transferability, traceability, and robustness), and examine their effectiveness in real-world settings. Furthermore, we review the evolution of deepfake generation techniques, highlighting their rapid developments. Finally, we identify key challenges and promising future research directions to enhance proactive defense mechanisms.

Motivation

While passive deepfake detection has been studied extensively, proactive defense remains underexplored. Three key motivations drive this research:

Limitations of Passive Detection

Passive detectors tend to lag behind new generation techniques, which creates an ongoing arms race. They offer limited interpretability and act only after the damage is done, when misinformation may already have spread and privacy may already have been violated.

Misuse of Generative Models

Generative models can be exploited to create misinformation or harmful content that spreads rapidly online. These technologies are becoming increasingly accessible to non-technical users through commercial applications and Machine-Learning-as-a-Service (MLaaS).

Intellectual Property Concerns

Generative models are increasingly vulnerable to model extraction attacks, where malicious actors extract substantial amounts of data or model parameters to train new models.

Key Insight: Proactive approaches address the deepfake problem at its source by implementing preventive measures during the content creation or distribution process, rather than detecting fakes after the fact.

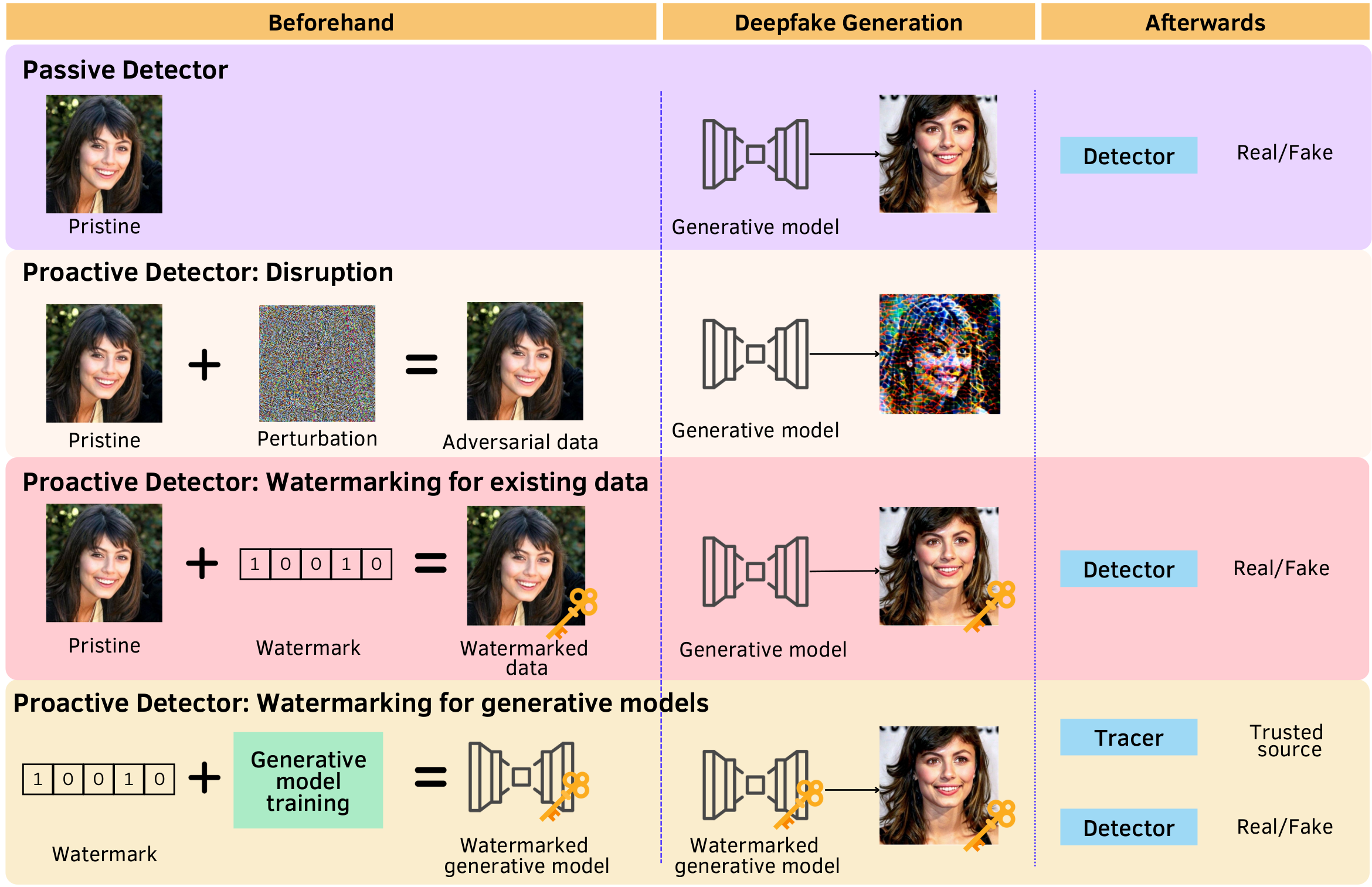

Taxonomy of Proactive Approaches

Proactive deepfake defense approaches can be divided into two main categories:

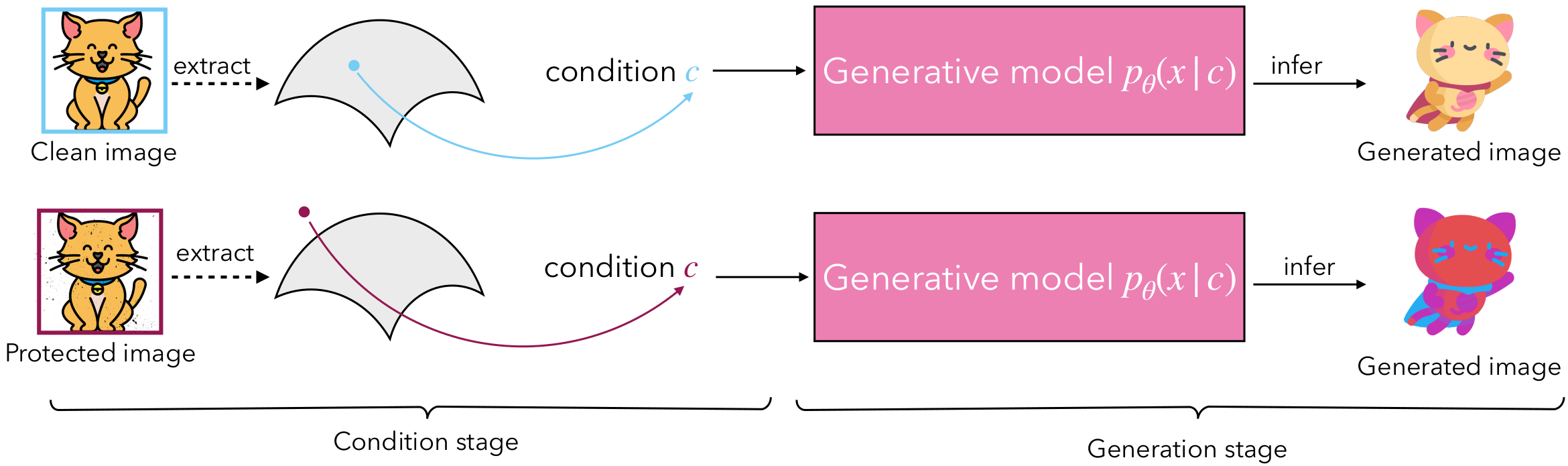

Disruption Approaches

Disruption approaches protect individuals' data by adding imperceptible perturbations before it is shared online, making it difficult for unauthorized parties to use the data to train or fine-tune generative models. They are further divided into:

- White-box disruption: Error-maximizing optimization, error-minimizing optimization, learning-based, generative model-based, universal perturbation generation

- Black-box disruption: Surrogate model-based, query-based

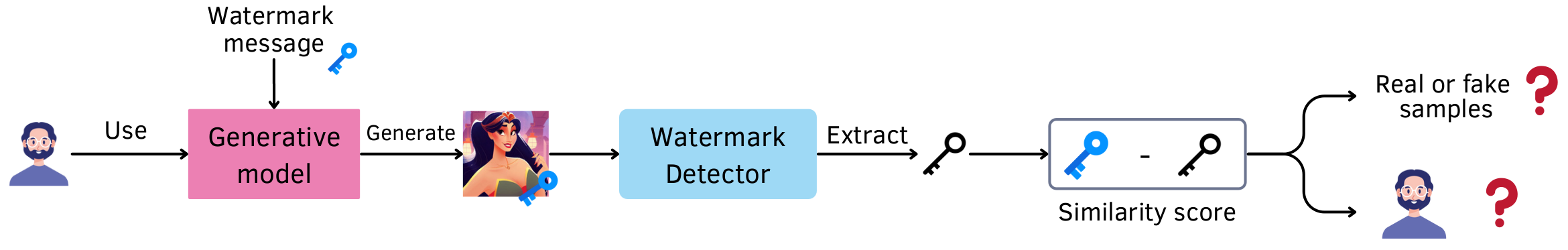
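To make the error-maximizing idea concrete, here is a minimal sketch, assuming a toy linear map in place of a real generative model. It runs projected sign-gradient ascent to find a perturbation, bounded in the L-infinity norm, that pushes the model output away from the clean output. The names `generate`, `output_distortion`, and `disrupt`, and the parameters `eps`, `step`, and `iters`, are illustrative assumptions and do not correspond to any specific method in the survey. Clipping the perturbed input to a valid pixel range is omitted for brevity.

```python
import random

def generate(weights, x):
    """Toy stand-in for a generative model: a fixed linear map y = W x."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]

def output_distortion(weights, x, delta):
    """Squared L2 gap between the outputs for the perturbed and clean input."""
    clean = generate(weights, x)
    perturbed = generate(weights, [v + d for v, d in zip(x, delta)])
    return sum((p - c) ** 2 for p, c in zip(perturbed, clean))

def disrupt(weights, x, eps=0.05, step=0.01, iters=20, seed=0):
    """Sign-gradient ascent on the distortion, projected onto |delta_j| <= eps."""
    rng = random.Random(seed)
    # Random start inside the budget: the gradient is exactly zero at delta = 0.
    delta = [rng.uniform(-eps, eps) for _ in x]
    for _ in range(iters):
        # For a linear map the distortion equals ||W delta||^2, so its
        # gradient with respect to delta is 2 * W^T (W delta).
        w_delta = generate(weights, delta)
        grad = [2 * sum(row[j] * r for row, r in zip(weights, w_delta))
                for j in range(len(x))]
        # Move each coordinate by a fixed step in the direction of the gradient sign.
        stepped = [d + step * ((g > 0) - (g < 0)) for d, g in zip(delta, grad)]
        # Projection: clip every coordinate back into [-eps, eps].
        delta = [max(-eps, min(eps, d)) for d in stepped]
    return delta

# Hypothetical weights and input, chosen only for illustration.
W = [[1.0, 2.0, 0.0], [0.0, 1.0, -1.0]]
x = [0.2, 0.5, 0.8]
delta = disrupt(W, x)
```

With a real generator the gradient would come from automatic differentiation rather than a closed form, and `eps` would be tuned against an imperceptibility metric.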

Watermarking Approaches

Watermarking approaches embed verifiable messages (watermarks) into data or model outputs, enabling identification and attribution of the content source. They are classified into:

- Watermarking for existing data: Visual data (encoder-decoder-based, generation-based, learnable) and audio data (continuous-based, discrete-based)

- Watermarking for generative models: Modifying model parameters or outputs
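The embed-then-verify pattern behind watermarking existing data can be sketched with a classical least-significant-bit (LSB) scheme. This is a deliberately simple stand-in, not one of the encoder-decoder or generation-based methods listed above; the function names `embed_bits` and `extract_bits` are assumptions made for illustration.

```python
def embed_bits(pixels, bits):
    """Write each message bit into the least significant bit of one 8-bit pixel."""
    if len(bits) > len(pixels):
        raise ValueError("message is longer than the cover signal")
    marked = list(pixels)
    for i, bit in enumerate(bits):
        # Clear the lowest bit, then set it to the message bit.
        marked[i] = (marked[i] & ~1) | bit
    return marked

def extract_bits(pixels, n_bits):
    """Read the message back from the lowest bit of the first n_bits pixels."""
    return [p & 1 for p in pixels[:n_bits]]

# Hypothetical cover pixels and message.
cover = [12, 255, 0, 77, 130, 131, 8, 9]
message = [1, 0, 1, 1, 0, 0, 1, 0]
marked = embed_bits(cover, message)
recovered = extract_bits(marked, len(message))
```

Each pixel changes by at most one intensity level, which keeps the mark hard to see, but the scheme is fragile: learned approaches aim to keep this imperceptibility while surviving post-processing.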

Evaluation Metrics

We identify and analyze critical evaluation metrics for effective proactive defense approaches:

Imperceptibility

Perturbations and watermarks must be imperceptible to human viewers and listeners while remaining effective at disrupting generative models or detectable by watermark detectors.
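One common proxy for imperceptibility in the visual domain is the peak signal-to-noise ratio (PSNR) between the original and the protected sample. The sketch below assumes 8-bit pixel values in flat lists; the function name `psnr` and the `max_value` parameter are illustrative choices, and PSNR is only one of several metrics that could be used here.

```python
import math

def psnr(original, modified, max_value=255.0):
    """Peak signal-to-noise ratio in dB; higher means a less visible change."""
    if len(original) != len(modified):
        raise ValueError("signals must have the same length")
    # Mean squared error between the two signals.
    mse = sum((a - b) ** 2 for a, b in zip(original, modified)) / len(original)
    if mse == 0:
        return math.inf  # identical signals
    return 10 * math.log10(max_value ** 2 / mse)
```

A protected image that differs from the original by exactly one intensity level at every pixel has an MSE of 1, so its PSNR is 20·log10(255), roughly 48.13 dB.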

Protectability & Detectability

The ability of disruption approaches to effectively prevent deepfake generation, and the ability of watermarking approaches to accurately detect watermarked content.

Transferability

Disruption perturbations should generalize across different generative models, even those with unknown architectures or parameters.

Traceability

Watermarking approaches should accurately attribute generated content to specific users or generative models through embedded watermark information.
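Attribution can be illustrated as a nearest-match lookup: each user or model is assigned a bit string, and an extracted (possibly noisy) message is mapped to the closest registered string. This is a minimal sketch under assumed names (`id_to_bits`, `attribute`, a hypothetical `registry` dictionary, and a `max_errors` tolerance); real systems may use error-correcting codes or learned decoders instead.

```python
def id_to_bits(user_id, n_bits=16):
    """Encode an integer identifier as a fixed-length bit list, most significant bit first."""
    return [(user_id >> i) & 1 for i in reversed(range(n_bits))]

def hamming(a, b):
    """Number of positions where two equal-length bit lists differ."""
    return sum(x != y for x, y in zip(a, b))

def attribute(extracted_bits, registry, max_errors=2):
    """Return the registered name closest to the extracted bits, or None if too far."""
    best_name, best_dist = None, None
    for name, bits in registry.items():
        dist = hamming(extracted_bits, bits)
        if best_dist is None or dist < best_dist:
            best_name, best_dist = name, dist
    if best_dist is not None and best_dist <= max_errors:
        return best_name
    return None  # no registered watermark is close enough to claim a match

# Hypothetical registry of two users.
registry = {"alice": id_to_bits(0x1234), "bob": id_to_bits(0xBEEF)}
noisy = id_to_bits(0x1234)
noisy[3] ^= 1  # simulate one bit corrupted by post-processing
owner = attribute(noisy, registry)
```

The `max_errors` threshold trades false attributions against missed ones; the multi-user setting becomes harder as the registry grows and identifiers sit closer together.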

Robustness

Protected samples and watermarked content must withstand post-processing techniques (e.g., compression), noise purification methods, and potential removal attacks.
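A robustness check typically applies a set of post-processing operations to a watermarked sample and reports how much of the message survives. The sketch below assumes a fragile lowest-bit watermark and uses coarse requantization as a crude stand-in for lossy compression; `bit_accuracy`, `requantize`, and `robustness_report` are hypothetical helper names, not an established benchmark API.

```python
def read_lsb(pixels, n_bits):
    """Extract a message stored in the lowest bit of the first n_bits pixels."""
    return [p & 1 for p in pixels[:n_bits]]

def bit_accuracy(sent, received):
    """Fraction of message bits recovered correctly."""
    return sum(a == b for a, b in zip(sent, received)) / len(sent)

def requantize(pixels, step=4):
    """Round each pixel down to a multiple of `step` (crude proxy for lossy compression)."""
    return [(p // step) * step for p in pixels]

def robustness_report(marked, message, extract, attacks):
    """Map each attack name to the bit accuracy measured after applying that attack."""
    return {name: bit_accuracy(message, extract(attack(marked), len(message)))
            for name, attack in attacks.items()}

# Hypothetical cover pixels and message; the mark is written into the lowest bit.
cover = [52, 200, 17, 90, 133, 64, 250, 9]
message = [1, 0, 1, 1, 0, 0, 1, 0]
marked = [(p & ~1) | b for p, b in zip(cover, message)]

report = robustness_report(
    marked, message, read_lsb,
    {"none": lambda px: list(px), "requantize": requantize},
)
```

Here the untouched sample yields an accuracy of 1.0, while requantization zeroes the lowest bits and drops accuracy to 0.5, which is chance level for this message. The same harness could wrap any embed/extract pair and a richer attack set.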

Key Contributions

Comprehensive Taxonomy

A structured taxonomy of proactive approaches in both visual and audio modalities, analyzing disruption and watermarking techniques based on their underlying methodologies, advantages, and limitations.

Multi-dimensional Evaluation

An analysis of the critical evaluation metrics (protectability/detectability, imperceptibility, robustness, transferability, traceability, and real-world evaluation), together with a comprehensive analysis of threat models and attack vectors for both categories.

Evolution of Deepfake Generation

Analysis of deepfake generation evolution from traditional architectures (GANs, VAEs) to advanced systems (diffusion models, CLIP-based models) that produce nearly undetectable synthetic content, highlighting why proactive approaches are essential.

Challenges & Future Directions

Identification of key open challenges: robustness-imperceptibility trade-off, transferability limitations, traceability in multi-user scenarios, real-world evaluation gap, lack of multimodal defense frameworks, and watermarking limitations.

Challenges & Future Directions

Robustness-Imperceptibility Trade-off

Both disruption and watermarking approaches face fundamental trade-offs between protection effectiveness and content quality. Imperceptible perturbations are more vulnerable to noise purification attacks, while watermarks that carry more bits degrade visual quality. Future work should explore frequency-domain watermarking and content-adaptive perturbations.

Transferability Limitations

Current disruption approaches struggle to generalize across diverse generative architectures. Each new architectural paradigm introduces fundamentally different generation processes. Future work should focus on model-agnostic perturbations that target fundamental fingerprints common across architectures.

Real-world Evaluation Gap

Limited testing in real-world environments with commercial generative AI systems creates uncertainty about practical effectiveness. Future work should establish standardized evaluation protocols incorporating diverse deployment scenarios and gray-box attacks.

Lack of Multimodal Defense Frameworks

Current approaches primarily target a single modality, leaving a gap in defending against advanced multimodal deepfake generation. Future research should develop unified multimodal proactive defense frameworks that can simultaneously protect data across different modalities.

Citation

If you find this work useful in your research, please consider citing:

@article{nguyen2025survey,
  title     = {A survey on proactive deepfake defense: Disruption and watermarking},
  author    = {Nguyen-Le, Hong-Hanh and Tran, Van-Tuan and Nguyen, Thuc and Le-Khac, Nhien-An},
  journal   = {ACM Computing Surveys},
  volume    = {58},
  number    = {5},
  pages     = {1--37},
  year      = {2025},
  publisher = {ACM New York, NY}
}

Acknowledgments

This publication has emanated from research conducted with the financial support of Science Foundation Ireland under Grant number 18/CRT/6183.