Personalized Privacy-Preserving Framework for Cross-Silo Federated Learning

Abstract

Federated learning (FL) has recently emerged as a promising decentralized deep learning (DL) framework that enables DL-based models to be trained collaboratively across clients without sharing private data. However, when the central party is active and dishonest, the data of individual clients might be perfectly reconstructed, creating a high risk that sensitive information is leaked. Moreover, FL suffers from non-independent and identically distributed (non-IID) data among clients, which degrades inference performance on local clients' data.

In this paper, we propose a novel framework, namely Personalized Privacy-Preserving Federated Learning (PPPFL), with a focus on cross-silo FL to overcome these challenges. Specifically, we introduce a stabilized variant of the Model-Agnostic Meta-Learning (MAML) algorithm to collaboratively train a global initialization from clients' synthetic data generated by Differentially Private Generative Adversarial Networks (DP-GANs). After reaching convergence, the global initialization is locally adapted by the clients to their private data.

Motivation

Cross-silo federated learning faces two critical, intertwined challenges that existing methods address only in isolation:

Privacy Leakage from Dishonest Servers

In a semi-honest scenario, private information can be leaked from inversion attacks on updated gradients. In an active-and-dishonest scenario, the server can fully eavesdrop and modify the shared global model, setting up trap weights for recovering clients' data with zero reconstruction loss.
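To make the leakage risk concrete, the following minimal sketch shows a well-known property of a single dense layer with a bias: for one sample, the weight gradient is the outer product of the bias gradient and the input, so dividing one by the other returns the input exactly. This is a simplified illustration, not the trap-weight attack referred to above; the squared-error loss, the layer sizes, and all function names are assumptions chosen for the example.

```python
import random

def dense_layer_gradients(x, W, b, target):
    """Gradients of 0.5 * ||W x + b - target||^2 for a single sample."""
    out = [sum(w * xj for w, xj in zip(row, x)) + bi for row, bi in zip(W, b)]
    delta = [o - t for o, t in zip(out, target)]        # dL/d(out)
    grad_W = [[d * xj for xj in x] for d in delta]      # outer product delta * x^T
    grad_b = list(delta)                                # dL/db equals delta
    return grad_W, grad_b

def reconstruct_input(grad_W, grad_b, tol=1e-12):
    """Recover x from any row whose bias gradient is non-zero."""
    for row, gb in zip(grad_W, grad_b):
        if abs(gb) > tol:
            return [g / gb for g in row]
    raise ValueError("all bias gradients are zero; nothing to divide by")

rng = random.Random(0)
x_private = [rng.uniform(0.0, 1.0) for _ in range(4)]   # hypothetical private sample
W = [[rng.gauss(0.0, 1.0) for _ in range(4)] for _ in range(3)]
b = [0.0, 0.0, 0.0]
target = [1.0, 0.0, 0.0]

# What a client would send for this one sample, and what a server could derive.
grad_W, grad_b = dense_layer_gradients(x_private, W, b, target)
x_recovered = reconstruct_input(grad_W, grad_b)
max_error = max(abs(a - r) for a, r in zip(x_private, x_recovered))
print("max reconstruction error:", max_error)
```

With batches and deeper networks the recovery is no longer this direct, which is where a server that can also modify the weights gains additional leverage.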

Non-IID Data Distribution

In cross-silo FL settings, clients (companies, institutions, hospitals) possess vastly different sizes and partitions of private data. This statistical heterogeneity leads to a decline in the performance of the aggregated global model, especially when a single model must serve all clients.

Key Insight: PPPFL is the first framework to integrate two critical FL research areas — privacy preservation and handling non-IID data — into a single cohesive solution, providing differential privacy guarantees while achieving improved convergence and personalized performance.

PPPFL Framework

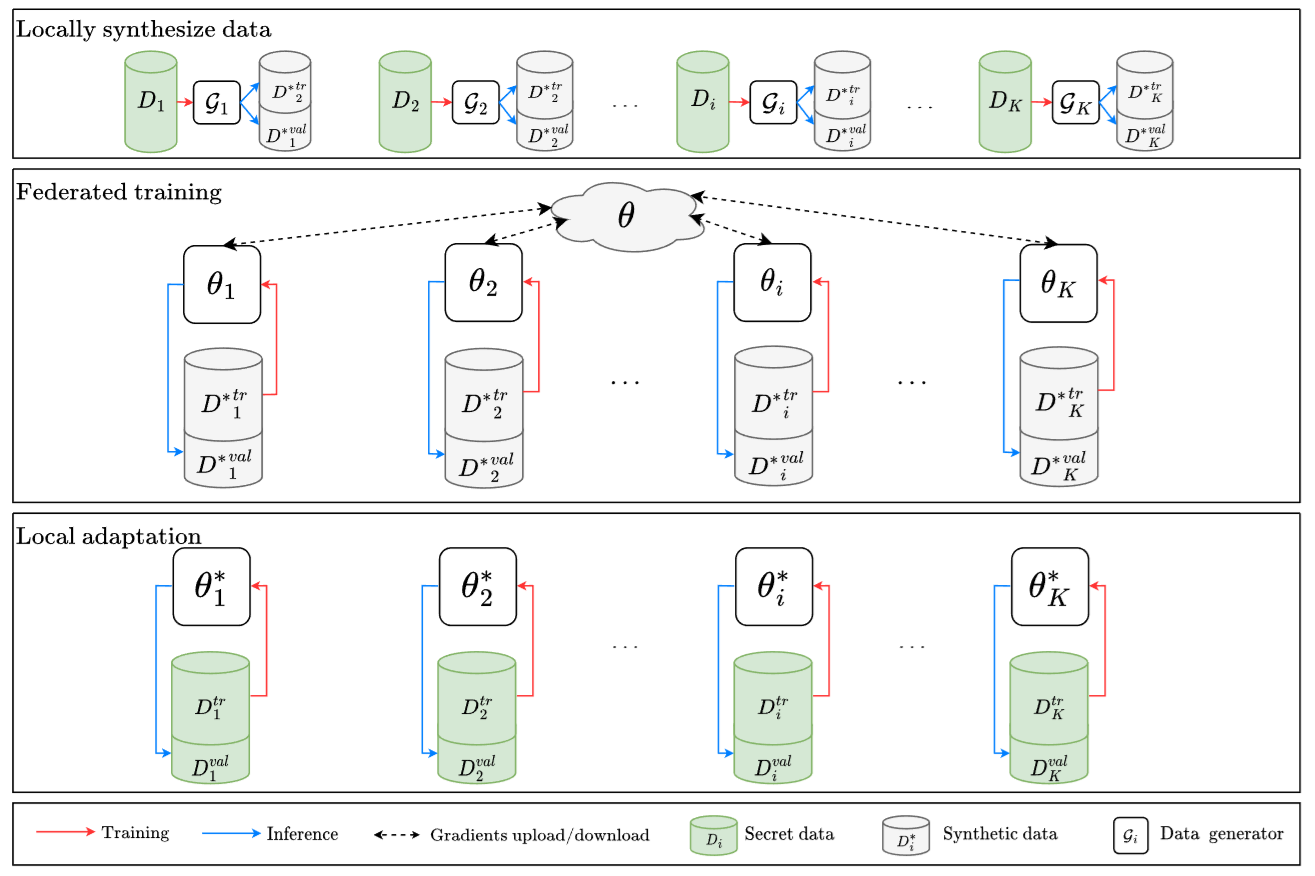

The proposed framework involves three consecutive stages:

Local Data Generation

Each client trains a differentially private data generator (based on DataLens) to synthesize artificial datasets. This ensures instance-level DP, providing stronger privacy guarantees than user-level DP. The original private data never leaves the client.

Federated Training

Clients use synthetic data for collaborative training via a stabilized MAML variant. The server meta-learns a generalized initialization θ from clients' feedback signals on synthetic data. Model EMA and Cosine Annealing Learning Rate prevent gradient instability.

Local Adaptation

After collaborative training, each client performs gradient descent updates on the well-initialized model using its private data, producing a personalized model θ* tailored to its local distribution.
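The local adaptation stage can be sketched as a few plain gradient descent steps starting from the shared initialization. The example below is only a toy under stated assumptions: a one-dimensional linear model with mean squared error stands in for the real network, and the data, learning rate, and step count are hypothetical.

```python
def mse(params, data):
    """Mean squared error of the linear model y = w * x + b."""
    w, b = params
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

def local_adaptation(theta, private_data, lr=0.1, steps=50):
    """Fine-tune the global initialization theta on one client's private data."""
    w, b = theta
    n = len(private_data)
    for _ in range(steps):
        grad_w = sum(2.0 * (w * x + b - y) * x for x, y in private_data) / n
        grad_b = sum(2.0 * (w * x + b - y) for x, y in private_data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

theta_global = (1.5, 0.5)                             # hypothetical meta-learned initialization
client_data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]   # hypothetical private data (y = 2x + 1)

theta_star = local_adaptation(theta_global, client_data)
print("loss before:", mse(theta_global, client_data))
print("loss after: ", mse(theta_star, client_data))
```

Only the initialization crosses the network in this sketch; the private data is read solely inside `local_adaptation`, which runs on the client.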

Key Technical Innovations

DataLens Integration for Instance-level DP

We consolidate DataLens — a state-of-the-art DP data generative model based on the PATE framework — into FL. DataLens uses multiple teacher discriminators with top-k gradient compression, stochastic sign quantization, and calibrated Gaussian noise to generate high-utility synthetic data while maintaining rigorous privacy guarantees.
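The three ingredients named above can be sketched roughly as follows. This is a simplified illustration and not the DataLens reference implementation: the clipping constant, the vote threshold, the exact quantization rule, and all function names are assumptions, and calibrating `sigma` to a target ε is deliberately left out.

```python
import random

def compress_gradient(grad, k, clip, rng):
    """Top-k sparsification followed by stochastic sign quantization."""
    # Clip and rescale each coordinate to [-1, 1].
    scaled = [max(-clip, min(clip, g)) / clip for g in grad]
    # Keep the k coordinates with the largest magnitude.
    top_idx = sorted(range(len(scaled)), key=lambda i: abs(scaled[i]), reverse=True)[:k]
    votes = [0] * len(grad)
    for i in top_idx:
        # Vote +1 with probability (1 + g) / 2, otherwise -1.
        votes[i] = 1 if rng.random() < (1.0 + scaled[i]) / 2.0 else -1
    return votes

def noisy_aggregate(teacher_grads, k, clip, sigma, threshold, rng):
    """Sum teacher votes, add Gaussian noise, then threshold to {-1, 0, +1}."""
    totals = [0.0] * len(teacher_grads[0])
    for grad in teacher_grads:
        for i, vote in enumerate(compress_gradient(grad, k, clip, rng)):
            totals[i] += vote
    noisy = [t + rng.gauss(0.0, sigma) for t in totals]
    return [1 if s >= threshold else -1 if s <= -threshold else 0 for s in noisy]

rng = random.Random(0)
# Hypothetical gradients from 5 teacher discriminators over 8 parameters.
teacher_grads = [[rng.gauss(0.0, 1.0) for _ in range(8)] for _ in range(5)]
update = noisy_aggregate(teacher_grads, k=3, clip=1.0, sigma=1.0, threshold=2.0, rng=rng)
print(update)
```

Because each teacher contributes at most `k` votes of magnitude 1, the sensitivity of the summed vote vector is bounded, which is what makes a fixed Gaussian noise scale meaningful in schemes of this kind.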

Stabilized MAML for Federated Training

The original MAML optimization is unstable due to multiple differentiations through the model. We propose a modified variant that employs Model Exponential Moving Average (EMA) in the inner loop and Cosine Annealing Learning Rate scheduling in the outer loop, enabling fast and stable convergence.
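The two stabilizers can be illustrated with their standard closed forms. The sketch below assumes that the EMA is taken over the parameters visited during a client's inner-loop steps and that the outer learning rate is annealed once per communication round; the source does not spell out these details, so the function names, decay value, and toy quadratic loss are illustrative only.

```python
import math

def cosine_annealing_lr(round_idx, total_rounds, lr_max, lr_min=0.0):
    """Standard cosine annealing: lr_max at round 0, lr_min at the last round."""
    cos_term = 1.0 + math.cos(math.pi * round_idx / total_rounds)
    return lr_min + 0.5 * (lr_max - lr_min) * cos_term

def ema_update(ema_params, new_params, decay):
    """Exponential moving average of model parameters."""
    return [decay * e + (1.0 - decay) * p for e, p in zip(ema_params, new_params)]

def inner_loop_with_ema(theta, grad_fn, steps, inner_lr, decay):
    """Run inner gradient steps and return the EMA-smoothed parameters."""
    params = list(theta)
    ema = list(theta)
    for _ in range(steps):
        grads = grad_fn(params)
        params = [p - inner_lr * g for p, g in zip(params, grads)]
        ema = ema_update(ema, params, decay)
    return ema

def quadratic_grad(params):
    """Gradient of the toy loss 0.5 * (p - 3)^2, used only for this demo."""
    return [p - 3.0 for p in params]

adapted = inner_loop_with_ema([0.0], quadratic_grad, steps=5, inner_lr=0.5, decay=0.9)
# 30 rounds matches the evaluation setup; lr_max = 0.01 is a hypothetical value.
schedule = [cosine_annealing_lr(r, 30, lr_max=0.01) for r in range(31)]
print(adapted, schedule[0], schedule[15], schedule[30])
```

The EMA damps round-to-round jumps in the adapted parameters, while the decaying outer learning rate shrinks meta-updates as training progresses; both act to limit the oscillation that unmodified MAML can exhibit.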

Personalized Privacy Budgets

Clients can independently select their preferred privacy budget ε and incorporate it into the DP-GAN training. The server integrates clients' privacy budgets via a softmax-weighted objective, improving model performance while respecting individual privacy requirements.
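One way to read the softmax-weighted objective is sketched below. It assumes that the weights are a softmax over the clients' ε values, so that clients with larger budgets (and therefore less noisy synthetic data) contribute more to the server objective; the direction of the weighting, the temperature parameter, and the example numbers are assumptions, not details taken from the source.

```python
import math

def softmax(values, temperature=1.0):
    """Numerically stable softmax."""
    m = max(values)
    exps = [math.exp((v - m) / temperature) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def weighted_objective(client_losses, epsilons, temperature=1.0):
    """Server objective: client losses weighted by softmax of privacy budgets."""
    weights = softmax(epsilons, temperature)
    return sum(w * loss for w, loss in zip(weights, client_losses))

epsilons = [1.0, 5.0, 10.0]        # hypothetical per-client privacy budgets
client_losses = [0.9, 0.6, 0.4]    # hypothetical losses on synthetic data

weights = softmax(epsilons)
objective = weighted_objective(client_losses, epsilons)
print(weights, objective)
```

A larger `temperature` flattens the weights toward a plain average, which may be preferable when the budgets differ widely and a single client would otherwise dominate.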

Main Results

PPPFL is evaluated on four benchmarks: MNIST, Fashion-MNIST, CIFAR-10, and CIFAR-100, with 5 clients and 30 communication rounds under non-IID settings.

Performance on DP-Synthetic Data (Server-Client FL)

| Method | MNIST BMT-F1 | MNIST BMTA | FMNIST BMT-F1 | FMNIST BMTA | CIFAR-10 BMT-F1 | CIFAR-10 BMTA | CIFAR-100 BMT-F1 | CIFAR-100 BMTA |
|---|---|---|---|---|---|---|---|---|
| FedAvg | 0.9223 | 0.9051 | 0.7267 | 0.7938 | 0.4898 | 0.6562 | 0.2142 | 0.3028 |
| FedNova | 0.8773 | 0.8996 | 0.6303 | 0.7607 | 0.3847 | 0.6627 | 0.2855 | 0.3374 |
| FedProx | 0.9006 | 0.9040 | 0.6994 | 0.7721 | 0.4856 | 0.6718 | 0.3014 | 0.3404 |
| SCAFFOLD | 0.8906 | 0.9024 | 0.6698 | 0.8061 | 0.3732 | 0.6614 | 0.2096 | 0.3333 |
| FedMeta | 0.9399 | 0.9616 | 0.8367 | 0.8800 | 0.6897 | 0.7466 | 0.2737 | 0.4133 |
| PPPFL (ours) | 0.9420 | 0.9640 | 0.8622 | 0.9199 | 0.7163 | 0.8000 | 0.3526 | 0.4800 |

Comparison to Decentralized FL Frameworks

| Method | MNIST | FMNIST | CIFAR-10 | CIFAR-100 |
|---|---|---|---|---|
| AvgPush | 0.9872 | 0.8900 | 0.6071 | 0.2975 |
| ProxyFL | 0.9870 | 0.8942 | 0.6292 | 0.3144 |
| Dis-PFL | 0.9000 | 0.9333 | 0.8380 | 0.2612 |
| PPPFL (ours) | 0.9640 | 0.9199 | 0.8000 | 0.4800 |

Key Findings

Superior Accuracy with Privacy Guarantees

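PPPFL outperforms all server-client FL baselines (FedAvg, FedNova, FedProx, SCAFFOLD, FedMeta) on both metrics across all four benchmarks. The BMT-F1 margin is largest on CIFAR-100: about 8 percentage points over FedMeta (0.3526 vs. 0.2737) and about 5 points over FedProx (0.3014), the best baseline score in that column.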

Stabilized Convergence

The original MAML diverges after a few communication rounds. The stabilized MAML variant with Model EMA and Cosine Annealing LR achieves fast convergence within 10 rounds, validated on CIFAR-10.

Privacy-Utility Trade-off

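Larger privacy budgets ε (that is, weaker privacy guarantees) yield better model accuracy and higher-quality synthetic data (lower FID). PPPFL also shows a strong negative rank correlation (Spearman's ρ = −0.90) between local model performance and collaboration gains: clients whose local models perform worse tend to gain more from collaborating, which provides a natural incentive for protocol participation.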

Citation

If you find this work useful in your research, please consider citing:

@article{tran2024personalized,
  title     = {Personalized Privacy-Preserving Framework for Cross-Silo Federated Learning},
  author    = {Tran, Van-Tuan and Pham, Huy-Hieu and Wong, Kok-Seng},
  journal   = {IEEE Transactions on Emerging Topics in Computing},
  volume    = {12},
  number    = {4},
  pages     = {1014--1024},
  year      = {2024},
  publisher = {IEEE}
}

Acknowledgments

This work was supported by VinUni-Illinois Smart Health Center (VISHC), VinUniversity.